The Latest from Western Farm Press

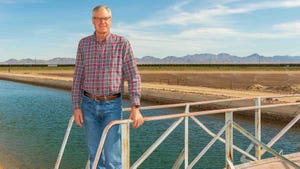

Klamath Project canal

Farm Policy

Klamath allocation falls short despite storms

Irrigators express ‘deep disappointment’ over 2024 ag water announcement.

Market Overview

| Contract | Last | Change | High | Low | Open | Last Trade |
|---|---|---|---|---|---|---|
| Jul 24 Corn | 452.25 | -0.25 | 453 | 451.5 | 452.25 | 09:48 AM |
| Jul 24 Oats | 354.25 | unch | 354.25 | 354.25 | 354.25 | 12:00 AM |
| May 24 Class III Milk | 18 | -0.13 | 18.07 | 17.89 | 18.07 | 04:12 AM |
| Jul 24 Soybean | 1180.25 | -1.75 | 1186 | 1180 | 1181 | 09:48 AM |
| Aug 24 Feeder Cattle | 259.4 | +0.925 | 260.625 | 257.675 | 258.5 | 06:04 PM |
| May 24 Ethanol Futures | 2.161 | unch | 2.161 | 2.161 | 2.161 | 09:38 PM |

Copyright © 2019. All market data is provided by Barchart Solutions.

Futures: at least 10 minute delayed. Information is provided ‘as is’ and solely for informational purposes, not for trading purposes or advice.

To see all exchange delays and terms of use, please see disclaimer.
